These features and Azure Databricks platform improvements were released in August 2022.

Note

In most cases, the release dates and content listed below correspond only to actual deployment on the Azure Public Cloud.

They describe the evolution history of the Azure Databricks service on the Azure Public Cloud for your reference and may not apply to Azure operated by 21Vianet.

Note

Releases are staged. Your Azure Databricks account may not be updated until a week or more after the initial release date.

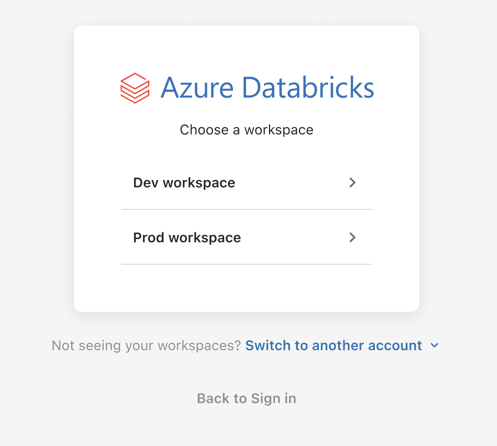

Account users can access the account console

August 1-31, 2022

Account users can access the Azure Databricks account console to view a list of their workspaces. Account users can only view workspaces that they have been granted access to. See Manage your Azure Databricks account.

All existing workspace users and service principals are synced automatically to your account as account-level users and service principals. See How do admins assign users to the account?.

Databricks ODBC driver 2.6.26

August 29, 2022

We have released version 2.6.26 of the Databricks ODBC driver (download). This release updates query support. You can now asynchronously cancel queries on HTTP connections upon API request.

This release also resolves the following issue:

- When using custom queries in Spotfire, the connector becomes unresponsive.

Databricks JDBC driver 2.6.29

August 29, 2022

We have released version 2.6.29 of the Databricks JDBC driver (download). This release resolves the following issues:

- When using an HTTP proxy with Cloud Fetch enabled, the connector does not return large data set results.

- The Databricks license text contained minor text issues, and documentation links were missing.

- The JAR file names were incorrect: SparkJDBC41.jar should have been DatabricksJDBC41.jar, and SparkJDBC42.jar should have been DatabricksJDBC42.jar.

Databricks Feature Store client now available on PyPI

August 26, 2022

The Feature Store client is now available on PyPI. The client requires Databricks Runtime 9.1 LTS or above, and can be installed using:

%pip install databricks-feature-store

The client is already packaged with Databricks Runtime for Machine Learning 9.1 LTS and above.

The client cannot be run outside of Databricks; however, you can install it locally to aid in unit testing and for additional IDE support (for example, autocompletion). For more information, see Databricks Feature Store Python client.
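As one way to picture local unit testing, the sketch below passes the client into a small function and substitutes a mock for it in the test. The function `publish_features`, the table name, and the placeholder DataFrame are hypothetical. The only client call assumed is `write_table(name=..., df=..., mode="merge")`; check it against the client reference before relying on it.

```python
from unittest.mock import MagicMock


def publish_features(fs_client, table_name, features_df):
    """Merge features_df into an existing feature table (hypothetical helper)."""
    # On Databricks, fs_client would be a databricks.feature_store.FeatureStoreClient.
    fs_client.write_table(name=table_name, df=features_df, mode="merge")
    return table_name


# Local test: a MagicMock stands in for the real client, so no cluster is needed.
fake_client = MagicMock()
fake_df = object()  # placeholder for a Spark DataFrame
result = publish_features(fake_client, "ml.customer_features", fake_df)

# Verify that the helper called the client exactly once with the expected arguments.
fake_client.write_table.assert_called_once_with(
    name="ml.customer_features", df=fake_df, mode="merge"
)
print("write_table called as expected")
```

Injecting the client keeps the business logic testable on a laptop, while the real client is only constructed in code that runs on Databricks.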

Unity Catalog is GA

August 25, 2022

Unity Catalog is generally available. For detailed feature announcements and limitations, see Unity Catalog GA release note.

Delta Sharing is GA

August 25, 2022

Delta Sharing is now generally available, beginning with Databricks Runtime 11.1. For details, see What is Delta Sharing?.

- Databricks-to-Databricks Delta Sharing is fully managed without the need for exchanging tokens.

- Create and manage providers, recipients, and shares with a simple-to-use UI.

- Create and manage providers, recipients, and shares with SQL and REST APIs with full CLI and Terraform support.

- Query changes to data, or share incremental versions with Change Data Feeds.

- Restrict recipient access to downloading credential files or querying data using IP access lists and region restrictions.

- Using Delta Sharing to share data within the same Azure Databricks account is enabled by default.

- Enforce separation of duties by delegating management of Delta Sharing to non-admins.

Databricks Runtime 11.2 (Beta)

August 23, 2022

Databricks Runtime 11.2, 11.2 Photon, and 11.2 ML are now available as Beta releases.

See the full release notes at Databricks Runtime 11.2 (EoS) and Databricks Runtime 11.2 for Machine Learning (EoS).

Reduced message volume in the Delta Live Tables UI for continuous pipelines

August 22-29, 2022: Version 3.79

With this release, the state transitions for live tables in a Delta Live Tables continuous pipeline are displayed in the UI only until the tables enter the running state. Any transitions related to successful recomputation of the tables are not displayed in the UI, but are available in the Delta Live Tables event log at the METRICS level. Any transitions to failure states are still displayed in the UI. Previously, all state transitions were displayed in the UI for live tables. This change reduces the volume of pipeline events displayed in the UI and makes it easier to find important messages for your pipelines. To learn more about querying the event log, see What is the Delta Live Tables event log?.

Easier cluster configuration for your Delta Live Tables pipelines

August 22-29, 2022: Version 3.79

You can now select a cluster mode, either autoscaling or fixed size, directly in the Delta Live Tables UI when you create a pipeline. Previously, configuring an autoscaling cluster required changes to the pipeline's JSON settings. For more information on creating a pipeline and the new Cluster mode setting, see Run an update on a Delta Live Tables pipeline.
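For comparison with the UI setting, here is a minimal sketch of the JSON settings that the two cluster modes roughly correspond to. It assumes the pipeline settings use a `clusters` entry with either an `autoscale` block or a `num_workers` value. The helper name, the mode labels, and the pipeline name are hypothetical.

```python
import json


def pipeline_cluster_settings(mode, min_workers=1, max_workers=5, num_workers=2):
    """Build one `clusters` entry for pipeline settings (illustrative helper).

    mode is "autoscaling" or "fixed"; these labels are assumptions of this sketch.
    """
    cluster = {"label": "default"}
    if mode == "autoscaling":
        cluster["autoscale"] = {"min_workers": min_workers, "max_workers": max_workers}
    elif mode == "fixed":
        cluster["num_workers"] = num_workers
    else:
        raise ValueError(f"unknown cluster mode: {mode!r}")
    return cluster


settings = {
    "name": "example-pipeline",  # hypothetical pipeline name
    "continuous": False,
    "clusters": [pipeline_cluster_settings("autoscaling", min_workers=1, max_workers=5)],
}
print(json.dumps(settings, indent=2))
```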

Identity federation is GA

August 25, 2022

Identity federation simplifies Azure Databricks administration by enabling you to assign account-level users, service principals, and groups to identity-federated workspaces. You can now configure and manage all of your users, service principals, and groups once in the account console, rather than repeating configuration separately in each workspace. To learn more about identity federation, see How do admins assign users to workspaces?. To get started, see How do admins enable identity federation on a workspace?.

Additional data type support for Databricks Feature Store automatic feature lookup

August 22-29, 2022: Version 3.79

Databricks Feature Store now supports BooleanType for automatic feature lookup.

Bring your own key: Encrypt Git credentials

August 23-29, 2022

You can use an encryption key for Git credentials for Databricks Repos.

See Bring your own key: Encrypt Git credentials.

Cluster UI preview and access mode replaces security mode

August 19, 2022

The new Create Cluster UI is in Preview. See Compute configuration reference.

Unity Catalog limitations (Public Preview)

August 16, 2022

- Scala, R, and workloads using the Machine Learning Runtime are supported only on clusters using the single user access mode. Workloads in these languages do not support the use of dynamic views for row-level or column-level security.

- Shallow clones are not supported when using Unity Catalog as the source or target of the clone.

- Bucketing is not supported for Unity Catalog tables. Commands trying to create a bucketed table in Unity Catalog will throw an exception.

- Overwrite mode for DataFrame write operations into Unity Catalog is supported only for Delta tables, not for other file formats. The user must have the CREATE privilege on the parent schema and must be the owner of the existing object.

- Streaming currently has the following limitations:

  - It is not supported in clusters using shared access mode. For streaming workloads, you must use single user access mode.

  - Asynchronous checkpointing is not yet supported.

  - Streaming queries lasting more than 30 days on all-purpose or jobs clusters will throw an exception. For long-running streaming queries, configure automatic job retries.

- Referencing Unity Catalog tables from Delta Live Tables pipelines is currently not supported.

- Groups previously created in a workspace cannot be used in Unity Catalog GRANT statements. This is to ensure a consistent view of groups that can span across workspaces. To use groups in GRANT statements, create your groups in the account console and update any automation for principal or group management (such as SCIM, Okta and Microsoft Entra ID connectors, and Terraform) to reference account endpoints instead of workspace endpoints.
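For the streaming limitation in the list above, automatic job retries can be sketched as a task fragment for a job definition. This assumes the Jobs API task fields `max_retries`, `min_retry_interval_millis`, and `retry_on_timeout`. The task key and notebook path are hypothetical, and the cluster specification is omitted.

```python
import json

# Fragment of a job task definition (not a complete job specification).
streaming_task = {
    "task_key": "stream_orders",  # hypothetical task name
    "notebook_task": {"notebook_path": "/Repos/example/stream_orders"},  # hypothetical path
    "max_retries": -1,  # -1 is assumed to mean "retry indefinitely"
    "min_retry_interval_millis": 60000,  # wait one minute between attempts
    "retry_on_timeout": True,
}
print(json.dumps(streaming_task, indent=2))
```

With retries configured this way, a streaming query that is stopped by the 30-day limit would be restarted by the job rather than by hand.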

Improved workspace search is now GA

August 9, 2022

You can now search for notebooks, libraries, folders, files, and repos by name. You can also search for content within a notebook and see a preview of the matching content. Search results can be filtered by type. See Search for workspace objects.

Use generated columns when you create Delta Live Tables datasets

August 8-15, 2022: Version 3.78

You can now use generated columns when you define tables in your Delta Live Tables pipelines. Generated columns are supported by the Delta Live Tables Python and SQL interfaces.

Improved editing for notebooks with Monaco-based editor (Experimental)

August 8-15, 2022

A new Monaco-based code editor is available for Python notebooks. To enable it, check the option Turn on the new notebook editor on the Editor settings tab on the User Settings page.

The new editor includes parameter type hints, object inspection on hover, code folding, multi-cursor support, column (box) selection, and side-by-side diffs in the notebook version history.

Databricks Runtime 10.3 series support ends

August 2, 2022

Support for Databricks Runtime 10.3 and Databricks Runtime 10.3 for Machine Learning ended on August 2. See Databricks support lifecycles.

Enable private connectivity with Azure Private Link (Public Preview)

August 2, 2022

Azure Databricks now supports enabling Azure Private Link connections for private connectivity between users and their Azure Databricks workspaces, and also between clusters on the compute plane and the core services on the control plane within the Databricks workspace infrastructure. Azure Private Link connects to services directly without exposing the traffic to the public network. This feature is available as a Public Preview. See Enable Azure Private Link back-end and front-end connections.

Delta Live Tables now supports refreshing only selected tables in pipeline updates

August 2-24, 2022

You can now start an update for only selected tables in a Delta Live Tables pipeline. This feature accelerates testing of pipelines and resolution of errors by allowing you to start a pipeline update that refreshes only selected tables. To learn how to start an update of only selected tables, see Run an update on a Delta Live Tables pipeline.
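Outside the UI, a selective update could be requested through the pipelines REST API. The sketch below only builds the request and does not send it. It assumes the start-update endpoint accepts `refresh_selection` and `full_refresh_selection` lists. The workspace URL, pipeline ID, token, and table name are placeholders.

```python
import json
import urllib.request


def build_selective_update_request(host, pipeline_id, token, refresh=(), full_refresh=()):
    """Build (but do not send) a request that updates only the named tables."""
    body = {}
    if refresh:
        body["refresh_selection"] = list(refresh)
    if full_refresh:
        body["full_refresh_selection"] = list(full_refresh)
    return urllib.request.Request(
        url=f"{host}/api/2.0/pipelines/{pipeline_id}/updates",
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/json"},
        method="POST",
    )


request = build_selective_update_request(
    host="https://adb-1234567890123456.7.azuredatabricks.net",  # placeholder workspace URL
    pipeline_id="my-pipeline-id",  # placeholder
    token="my-token",  # placeholder; never hard-code real tokens
    refresh=["sales_orders_cleaned"],  # hypothetical table name
)
# Nothing is sent here; pass `request` to urllib.request.urlopen to submit it.
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```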

Job execution now waits for cluster libraries to finish installing

August 1, 2022

When a cluster is starting, your Databricks jobs now wait for cluster libraries to complete installation before executing. Previously, job runs would wait for libraries to install on all-purpose clusters only if they were specified as a dependent library for the job. For more information on configuring dependent libraries for tasks, see Configure and edit Databricks tasks.
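To illustrate what a dependent library looks like in a task definition, here is a minimal fragment. It assumes the Jobs API `libraries` field with a `pypi` entry. The task key, cluster ID, notebook path, and package pin are hypothetical.

```python
import json

# Fragment of a job task that declares a dependent library.
task = {
    "task_key": "score_customers",  # hypothetical task name
    "existing_cluster_id": "0801-123456-abcdefgh",  # hypothetical all-purpose cluster ID
    "notebook_task": {"notebook_path": "/Repos/example/score_customers"},  # hypothetical path
    "libraries": [
        {"pypi": {"package": "scikit-learn==1.1.2"}},  # hypothetical dependency pin
    ],
}
print(json.dumps(task, indent=2))
```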